Research Overview

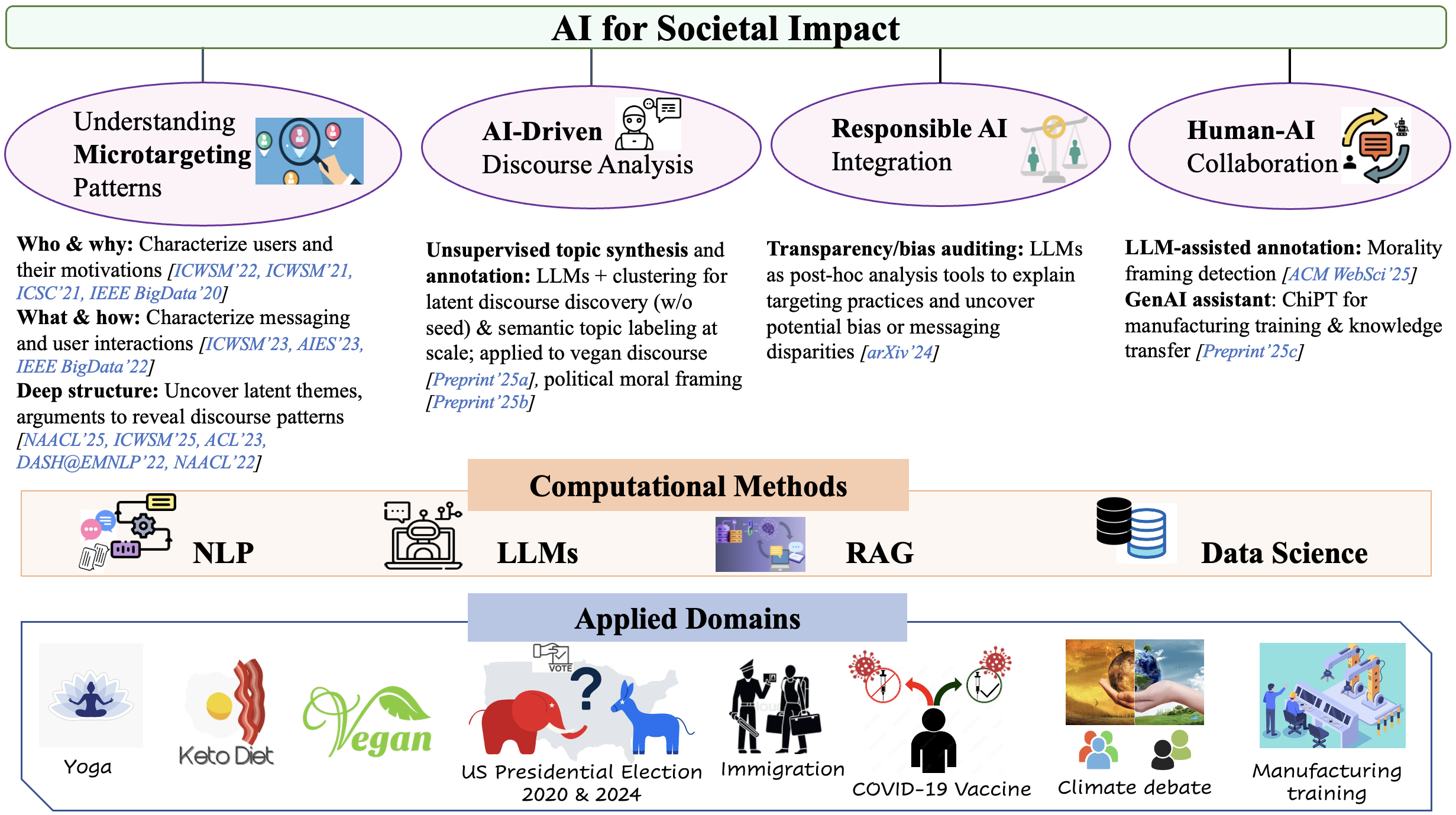

My research lies at the intersection of natural language processing (NLP) and computational social science (CSS), driven by the mission to develop scalable, explainable, interpretable, collaborative, and socially responsible artificial intelligence (AI) methods that address pressing societal challenges. As communication is increasingly mediated by digital platforms and algorithmic decision systems, information flows play a central role in influencing civic engagement, public opinion, and social cohesion. Yet these processes often remain opaque, making it difficult for researchers, policymakers, and the public to understand what is said, to whom, how, and with what disparities. Existing approaches rarely offer the transparency, scalability, and responsibility needed to understand and audit such dynamics.

I aim to address this gap by developing scalable and responsible AI methods that make complex discourse patterns visible and interpretable. My work focuses on turning large-scale textual data into actionable information tools while prioritizing transparency, explainability, and human oversight. This involves tackling challenges such as modeling nuanced themes and arguments in real-world discourse, integrating responsible AI principles into analytical workflows, and designing human-centered AI systems.

Guided by this vision, my research spans three interconnected directions. I develop computational methods powered by NLP, generative AI (GenAI), and data science for (1) communication and discourse modeling, (2) responsible AI integration, and (3) human-centered AI systems. These contributions yield both computer science artifacts, including datasets, models, and human-in-the-loop, machine-in-the-loop, and bias-auditing frameworks (the latter covering post-hoc auditing of platform practices and GenAI bias evaluation), and empirical insights grounded in real-world data. I have applied these methods to a wide range of socially significant domains, including elections, the climate and vaccine debates, immigration, lifestyle choices (yoga, keto, veganism), and AI governance.

(1) Communication and Discourse Modeling

Understanding and Analyzing Microtargeting Patterns

We now live in a world where messages can reach people directly through social media, bypassing traditional media such as television, radio, and print. These platforms not only enable massive reach but also collect extensive user data, making highly targeted advertising possible. While microtargeting can improve content relevance, it also raises serious concerns: manipulation of user behavior, the creation of echo chambers, and the amplification of polarization. My research is motivated by the observation that some of these risks can be mitigated by increasing transparency, identifying conflicting or harmful messaging choices, and surfacing the bias introduced in messaging in a nuanced way [AAAI’25]. Understanding microtargeting patterns is therefore essential to fostering healthier public discourse in the digital age and supporting a more cohesive society.

Analyzing microtargeting and activity patterns presents major technical challenges. First, messaging strategies are dynamic, context-dependent, and often opaque. Second, user identities and motivations are typically hidden or ambiguous. To address these challenges, I develop NLP and LLM-based methods to analyze microtargeting dynamics: what messages are sent, to whom, and how they are received. My research focuses on developing computational methods for:

- Characterizing users and their motivations for engaging with digital platforms, especially when identity signals are sparse or ambiguous [ICWSM’22] [ICWSM’21] [ICSC’21] [IEEE BigData’20].

- Analyzing message content and user interactions, capturing how individuals engage with targeted messaging across diverse contexts [ICWSM’23] [AIES’23] [IEEE BigData’22].

- Extracting latent thematic and argumentative structures to capture the nuances of messaging [NAACL’25] [ICWSM’25].

AI-Driven Discourse Analysis

- Integrating LLMs with advanced clustering algorithms, which enhances semantic coherence, supports unsupervised annotation, and enables scalable analysis of vegan discourse [ACL’26].

- Combining clustering with prompt-based labeling so that LLMs iteratively build topic taxonomies and annotate moral framing in political messaging without requiring seed sets or domain expertise [arXiv’25].

- A unified framework for discovering the latent thematic structure of climate narratives across Meta ad texts and Bluesky posts that combines semantic embeddings, HDBSCAN clustering, and LLM-based summarization and theme generation [arXiv’26a].
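
The embed, cluster, and label pipeline named in the last bullet can be sketched in a few lines. This is a minimal, illustrative sketch under stated assumptions, not the implementation from [arXiv’26a]: a bag-of-words term-count vector stands in for the semantic embedding model, a greedy cosine-threshold grouping stands in for HDBSCAN (it borrows only HDBSCAN's idea of leaving sparse points unassigned as noise), and a top-terms heuristic stands in for the LLM that summarizes each cluster and generates its theme. All function names, thresholds, and example texts are hypothetical.

```python
import math
import re
from collections import Counter


# Hypothetical stand-in for a semantic embedding model: a term-count vector.
# A real pipeline would call a sentence encoder here instead.
def embed(text):
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Simplified stand-in for HDBSCAN (not HDBSCAN itself): assign each item to the
# first cluster whose seed is similar enough, else open a new cluster.
# Clusters smaller than min_size are labeled -1 (noise).
def cluster(vectors, threshold=0.3, min_size=2):
    seeds, groups = [], []
    for i, vec in enumerate(vectors):
        for c, seed in enumerate(seeds):
            if cosine(vec, seed) >= threshold:
                groups[c].append(i)
                break
        else:  # no existing seed was similar enough
            seeds.append(vec)
            groups.append([i])
    labels = [-1] * len(vectors)
    next_id = 0
    for members in groups:
        if len(members) >= min_size:
            for i in members:
                labels[i] = next_id
            next_id += 1
    return labels


# Hypothetical stand-in for LLM-based theme generation: in practice each
# cluster's texts would go to an LLM with a summarization prompt. Here we
# join the terms that appear in the most documents of the cluster.
STOPWORDS = {"a", "and", "are", "for", "in", "is", "of", "on", "our", "the", "to"}


def label_theme(texts, top_n=3):
    doc_freq = Counter()
    for text in texts:
        doc_freq.update(t for t in embed(text) if t not in STOPWORDS)
    return " / ".join(term for term, _ in doc_freq.most_common(top_n))


def discover_themes(texts, threshold=0.3, min_size=2):
    labels = cluster([embed(t) for t in texts], threshold, min_size)
    themes = {}
    for cid in sorted(set(labels) - {-1}):
        members = [t for t, label in zip(texts, labels) if label == cid]
        themes[cid] = label_theme(members)
    return labels, themes


if __name__ == "__main__":
    # Hypothetical example texts, not drawn from any real dataset.
    docs = [
        "Solar power jobs are growing fast",
        "New solar power farms create jobs",
        "Wildfires and floods threaten our homes",
        "Floods and wildfires are getting worse",
        "Vote in the local election",
    ]
    labels, themes = discover_themes(docs)
    print(labels)  # → [0, 0, 1, 1, -1]
    print(themes)  # → {0: 'solar / power / jobs', 1: 'wildfires / floods / threaten'}
```

Swapping in a real sentence encoder, an actual HDBSCAN implementation (for example `sklearn.cluster.HDBSCAN` in scikit-learn 1.3+ or the `hdbscan` package), and one LLM prompt per cluster would keep the same three-stage structure; the stand-ins here only keep the example runnable without model downloads.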

(2) Responsible AI Integration

Building on my work on understanding microtargeting patterns, I pursue a complementary line of research that audits both platform practices and AI-generated messaging, revealing how issues of bias, fairness, and transparency arise in algorithmic and AI-mediated communication.

- Opaque platform practices prevent external stakeholders (researchers, auditors, policymakers) from auditing microtargeting. To bridge this gap, I developed an LLM-based post-hoc auditing framework that reverse-engineers messaging practices, explains targeting choices, and surfaces demographic disparities. Applied to the climate debate, the framework shows how ads differentially target young adults, women, and older populations, while a fairness analysis reveals biases in the model's predictions, particularly affecting senior citizens and male audiences [EMNLP’25].

- My work presents the first systematic audit of age and gender bias in LLM-generated persuasive climate messaging. Using GPT-4o, Llama-3.3, and Mistral-Large-2.1, it contrasts intrinsic and context-driven bias, showing that messages targeted at male or younger audiences emphasize agency and innovation, while those targeted at female or older audiences stress care and tradition. The findings highlight the need for bias-aware generation pipelines and transparent auditing in socially sensitive communication [ACL’26].
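
A post-hoc disparity check of the kind described in the first bullet can be illustrated with simple per-group metrics. The sketch below is hypothetical and is not the [EMNLP’25] framework: it assumes that, for each ad, we already know the audience group it actually targeted and the group a model predicted, and it measures (a) how targeting is distributed across groups and (b) per-group recall, flagging groups whose recall trails the best-served group by more than a tolerance. The recall-gap measure, the age brackets, the 0.1 tolerance, and the toy records are all assumptions chosen for illustration.

```python
from collections import Counter, defaultdict


def targeting_share(targets):
    """Fraction of ads aimed at each audience group."""
    counts = Counter(targets)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def per_group_recall(records):
    """records: (true_group, predicted_group) pairs, one per ad.
    Returns, for each true group, the fraction of its ads whose audience
    the model predicted correctly."""
    hits, totals = defaultdict(int), defaultdict(int)
    for true_group, predicted_group in records:
        totals[true_group] += 1
        hits[true_group] += int(true_group == predicted_group)
    return {group: hits[group] / totals[group] for group in totals}


def flag_disparities(records, tolerance=0.1):
    """Groups whose recall trails the best-served group by more than tolerance."""
    recalls = per_group_recall(records)
    best = max(recalls.values())
    return sorted(g for g, r in recalls.items() if best - r > tolerance)


if __name__ == "__main__":
    # Hypothetical audit records (true target, predicted target); toy data only.
    records = [
        ("18-24", "18-24"), ("18-24", "18-24"),
        ("25-44", "25-44"), ("25-44", "25-44"),
        ("65+", "65+"), ("65+", "25-44"), ("65+", "25-44"), ("65+", "65+"),
    ]
    print(targeting_share(t for t, _ in records))
    print(per_group_recall(records))
    print(flag_disparities(records))  # → ['65+']
```

Recall gaps are only one of several possible fairness measures; precision or calibration per group could be substituted without changing the overall structure.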

(3) Human-Centered AI Systems

My research develops human–AI collaborative frameworks that position AI as a partner that amplifies, rather than replaces, human expertise.

- I developed a holistic framework for analyzing social media posts that integrates stance, reason, and morality frame analysis with minimal supervision. This work studies how to model the dependencies between the different levels of analysis and how to incorporate human insights into the learning process [NAACL’22]. I further extended this work with an interactive framework for extracting nuanced arguments [DASH @EMNLP’22] and a concept learning framework for theme discovery validated by experts [ACL’23].

- I explored how LLMs can assist human annotators in identifying morality frames in vaccination debates on social media. The findings show that LLM-assisted workflows improve annotation quality, reduce task difficulty, lower cognitive load, and speed up annotation [ACM WebSci’25].

- AI Governance and Grounded Question Answering. I develop grounded question-answering systems for complex policy domains, including a retrieval-augmented generation (RAG) system over the AGORA corpus of global AI policy documents that combines dense retrieval with preference-aligned generation. In this work, we show that better retrieval does not necessarily lead to better answers; stronger retrieval can even produce more confident hallucinations when key information is missing. Through expert evaluation with policy researchers, we find that while the system captures core policy themes and cites relevant evidence, it can misinterpret regulatory details, omit critical provisions, and struggle with cross-document reasoning. This work highlights a key challenge for real-world AI systems: reliability requires more than improving individual components; it demands end-to-end grounding, human alignment, and careful evaluation in high-stakes settings such as AI governance [arXiv’26b].

- ChiPT: A Human-in-the-Loop GenAI Assistant for Manufacturing Training. In industry settings, we designed a domain-specific RAG system with scalable human-in-the-loop feedback that assists workers with real-time training, troubleshooting, and skill development. ChiPT leverages process manuals, expert feedback, and verified responses to improve knowledge transfer from retiring experts to new employees and to reduce expert workload.
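
The retrieve-then-generate pattern behind both RAG systems above can be sketched as follows. This is a toy illustration under explicit assumptions, not the AGORA or ChiPT implementation: term-count vectors stand in for a learned dense encoder, the corpus and document IDs are made up, preference-aligned generation is not modeled, and the code only builds the grounded prompt that would be sent to a generator. The minimum-similarity check that returns `None` (abstains) when no passage is relevant enough is a hypothetical guard, motivated by the observation that retrieval can yield confident hallucinations when key information is missing; it is not described as part of either system.

```python
import math
import re
from collections import Counter


# Hypothetical stand-in for a dense encoder: a term-count vector.
def embed(text):
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, corpus, k=2):
    """Return the k (doc_id, score) pairs most similar to the query."""
    q = embed(query)
    scored = [(doc_id, cosine(q, embed(text))) for doc_id, text in corpus.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


def build_grounded_prompt(query, corpus, k=2, min_score=0.2):
    """Build a prompt that restricts the generator to retrieved passages.
    Returns None (abstain) if no passage clears min_score; this guard is a
    hypothetical design choice, not part of the systems described above."""
    hits = [(doc_id, s) for doc_id, s in retrieve(query, corpus, k) if s >= min_score]
    if not hits:
        return None
    context = "\n".join(f"[{doc_id}] {corpus[doc_id]}" for doc_id, _ in hits)
    return (
        "Answer using only the passages below and cite their IDs. "
        "If the passages do not contain the answer, say so.\n"
        f"{context}\nQuestion: {query}"
    )


if __name__ == "__main__":
    # Made-up policy snippets with hypothetical IDs (not from AGORA).
    corpus = {
        "doc-1": "The act requires risk assessments for high risk AI systems",
        "doc-2": "The order directs agencies to publish AI guidance",
        "doc-3": "Funding for semiconductor research is expanded",
    }
    print(retrieve("Which AI systems require risk assessments", corpus))
    print(build_grounded_prompt("Which AI systems require risk assessments", corpus))
    print(build_grounded_prompt("What is the tax rate on imported wine", corpus))  # → None
```

A real system would replace `embed` with a dense encoder, send the prompt to an LLM, and, in a human-in-the-loop setting such as ChiPT, route low-confidence or abstained queries to experts.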